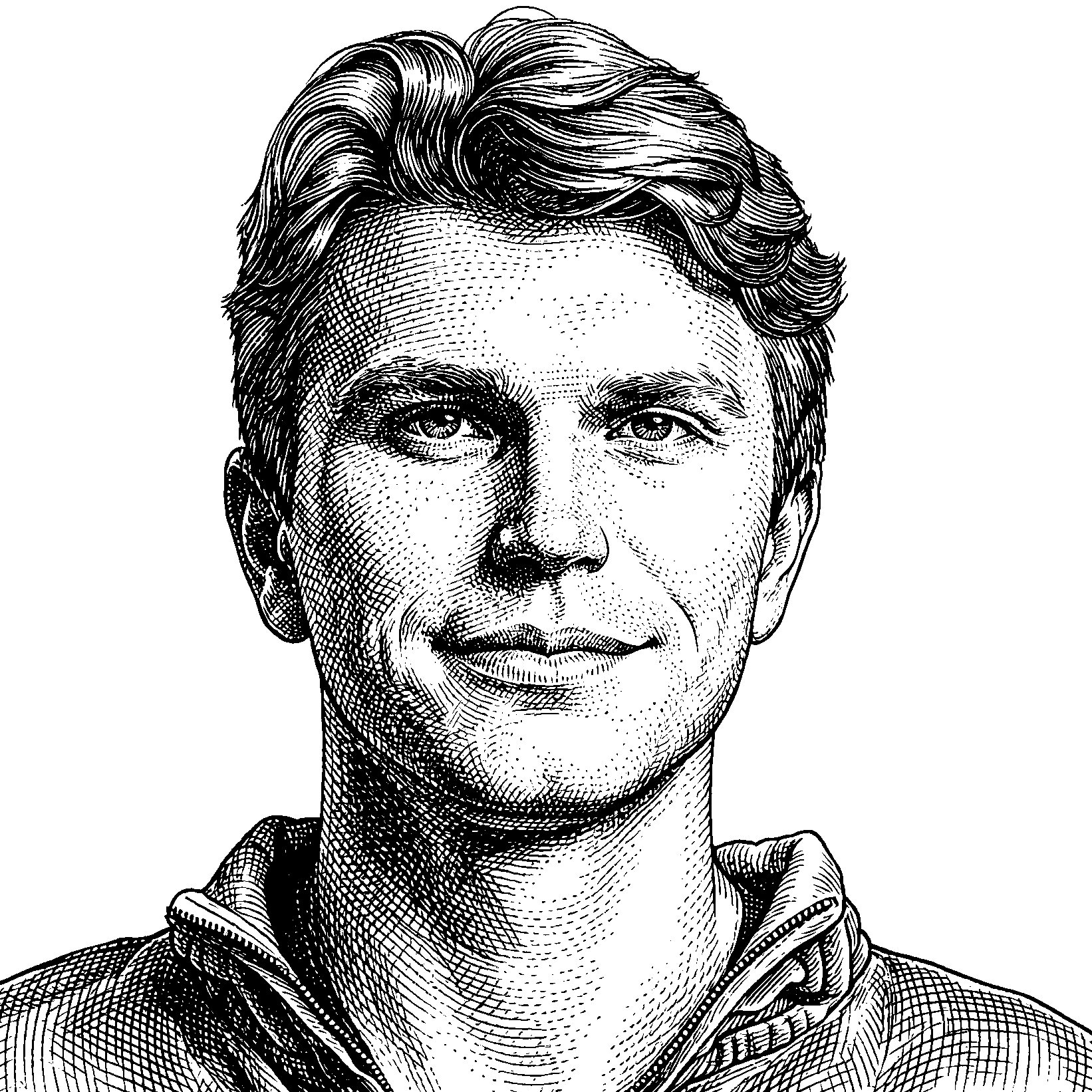

Jeremy Hadfield currently works in Applied AI at Anthropic. He studied philosophy, neuroscience, and computer science as an undergraduate at Dartmouth College, where he also earned a Master of Engineering Management. His academic interests have long centered on consciousness, happiness, and suffering, themes he approaches from a rigorous, multidisciplinary perspective.

Previously, as an intern at the Qualia Research Institute (QRI), Jeremy contributed to research on quantifying consciousness and on developing a consistent, meaningful account of valence, the spectrum of experience ranging from suffering to happiness. His work at QRI included exploring the “symmetry theory of valence,” a framework proposed for understanding these fundamental aspects of human experience.

At MTAConf 2020, Jeremy gave a presentation bridging scientific inquiry and theological considerations. He explored how scripture addresses happiness and suffering in the wider context of neuroscience, computer science, and philosophy.